Natural State Child

Pushing the Boundaries of Generative AI in Music Video Production

“Natural State Child” is a short music video, one to three minutes long, that explores the emotional journey of moving from a small town to a major city. The project served as a technical test of current generative AI capabilities: all of its content was AI-generated, from the lyrics and musical composition to every frame of the visuals.

Project Specifications

Role: AI Creative Director & Lead Editor

Context: FMX 323: AI: Imaging The Future | University of Tampa

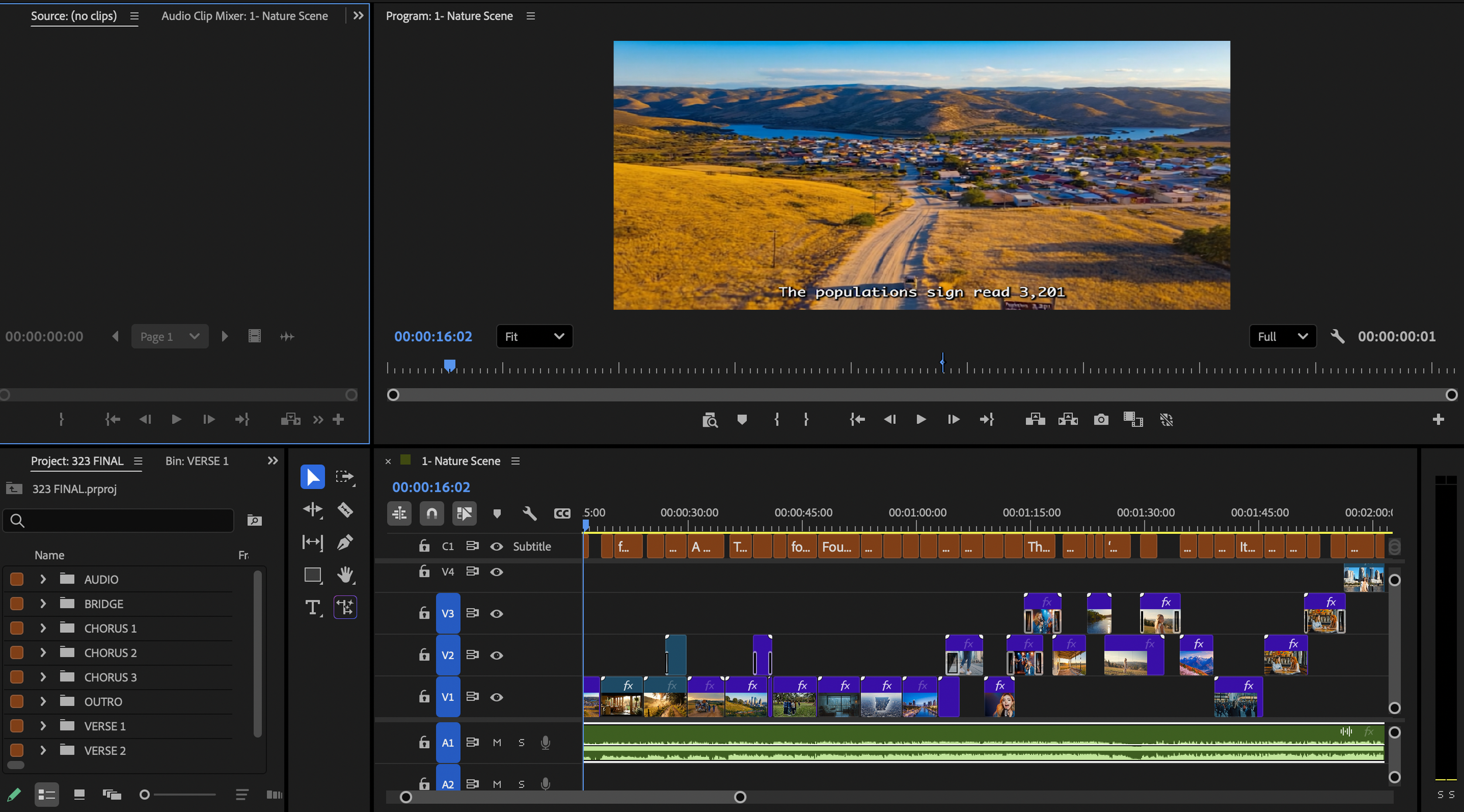

Tech Stack: Google Gemini (Lyrics), Suno AI (Audio), Runway AI & Google Veo 3 (Video), Adobe Premiere Pro (Editing)

Requirement: 100% AI-generated content (No original footage)

The Vision: A Story of Transition

The project explores a person's "natural state" as they navigate a new urban environment, drawing on my own move from Arkansas, the Natural State, to the big city of Tampa, Florida. To tell this story, I served as a high-level curator, guiding multiple AI engines toward a consistent narrative style while exploring the distinct visual personalities of the different video-generation platforms.

Key Production Objectives:

Multi-Platform Integration: Blending the output of multiple AI models into a single, cohesive visual story.

Lyric-to-Visual Alignment: Prompting video engines to generate specific metaphors that matched the AI-generated lyrics.

Technical Experimentation: Testing the limits of AI-generated motion, specifically how it handles complex transitions between rural and city landscapes, and how well it produces visuals across a wide range of styles and subjects.

The Process: The AI Workflow

This project required a component-based approach, using each tool for its specific strength:

AI Lyric & Music Generation: I started by prompting Google Gemini for song lyrics about moving from a small town to a big city. Once the lyrics were refined, I brought them into Suno AI to generate a high-fidelity vocal and instrumental track.

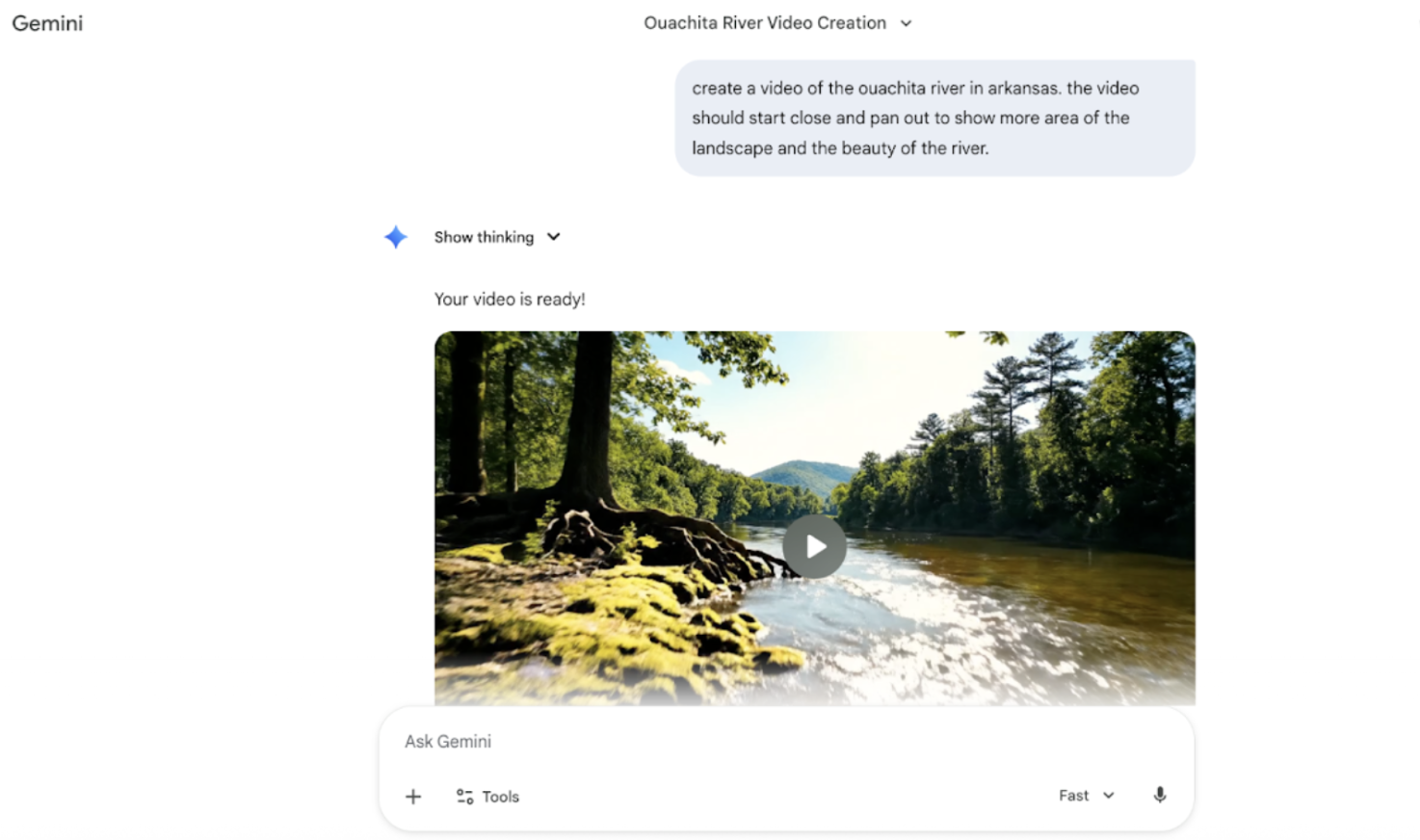

Parallel Video Generation: I used Runway AI and Google Veo 3 side by side. This let me compare their outputs and, for each clip, pick the platform that best captured the mood I wanted.

Prompt Engineering: Each clip required precise prompting to ensure the "Natural State Child" remained a recognizable character throughout the video, despite being generated by different algorithms.

Final Cinematic Edit: I brought all AI assets into Adobe Premiere Pro, focusing on rhythmic editing and color grading to ensure the final music video felt like a single, unified piece of art.
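
To illustrate the prompt engineering step above, here is a minimal sketch of one way to keep a character consistent across platforms: store a fixed character description once and reuse it in every clip prompt. This is an illustration, not the exact prompts used in the project. The `CharacterSheet` class, the `build_prompt` function, and all placeholder text are hypothetical, and the sketch does not call Runway or Veo; it only builds the text that would be pasted into each tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CharacterSheet:
    """Hypothetical fixed description reused in every clip prompt."""
    name: str
    appearance: str
    style: str


def build_prompt(sheet: CharacterSheet, scene: str, lyric: str) -> str:
    """Combine the fixed character sheet with a per-clip scene and lyric."""
    return (
        f"{sheet.name}: {sheet.appearance}. "
        f"Scene: {scene}. "
        f"Visual metaphor for the lyric: '{lyric}'. "
        f"Style: {sheet.style}."
    )


# Placeholder values: the real descriptions and lyrics are not reproduced here.
sheet = CharacterSheet(
    name="Natural State Child",
    appearance="placeholder description of the character's look and clothing",
    style="placeholder description of the shared cinematic style",
)

clips = [
    ("small-town main street at dusk", "placeholder lyric line 1"),
    ("downtown skyline at night", "placeholder lyric line 2"),
]

# One prompt per clip; the same text can be tried on either video platform.
prompts = [build_prompt(sheet, scene, lyric) for scene, lyric in clips]

for prompt in prompts:
    print(prompt)
```

Because the character and style text live in one place, a change to the character's look carries over to every clip prompt, which makes it easier to compare how each platform interprets the same description.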

Reflection: What I Learned

This was my most experimental project yet. It taught me that while AI can generate amazing clips with few apparent limits, the real work lies in curation and editing. I had to learn the language of each platform to get them to work together and make the final video look like one seamless cinematic piece. The hardest part was making the transition from the small town to the big city feel like one continuous journey rather than a series of random clips. It showed me that my role as a designer is to be the brain that connects all these different technologies.

Looking Ahead: The Ethical Future of AI

Working on this project made me think a lot about the future of video. I’m really interested in the ethics of AI generation and in how we can use these tools responsibly to tell stories that would be too expensive or impossible to film in real life. In the future, I want to explore how AI can be used to create speculative designs, for example showing people what a city could look like in fifty years. I want to keep pushing these boundaries while making sure the human story always stays at the center.